What if you could rewind the evolution of eyesight and watch it unfold in fast forward on a laptop screen? That is essentially what a team of researchers has done by letting artificial animals evolve eyes inside a virtual world. The result is a digital ecosystem where blind creatures slowly learn to see, and their eyes end up looking surprisingly similar to those found in nature.

The work, led by scientists at Lund University together with colleagues at the Massachusetts Institute of Technology (MIT), is described in the journal Science Advances and in a recent university press release. The team used artificial intelligence not to recognize cats in photos, but to “replay” millions of years of evolution inside a controlled simulation.

Evolution inside a digital world

In their experiment, the researchers created tiny virtual organisms and dropped them into a synthetic environment built entirely from code. At the start, these creatures were effectively blind.

Each had a simple body, a rudimentary nervous system and basic sensors that could, at best, detect light. Their tasks were familiar from the real world. Move through a maze. Avoid obstacles. Find “food” and stay away from “poison.”

Generation after generation, the system introduced random variations. Digital animals that navigated better survived and passed on their traits. Those that failed simply disappeared from the gene pool. It is natural selection, only compressed into hours of computing time instead of eons. Faced with the demands of the virtual environment and its tasks, the creatures had to adapt or vanish.
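For readers who want to see the bare mechanics, the loop of random variation and selection described above can be sketched in a few lines of Python. This is only a toy illustration, not the researchers' simulation: the genome (a short list of numbers standing in for sensor settings), the `fitness` score, the target values and every parameter below are assumptions made up for the example.

```python
import random


def fitness(genome, target):
    # Hypothetical score: higher (closer to zero) when the genome's
    # values are nearer to an arbitrary target.
    return -sum((g - t) ** 2 for g, t in zip(genome, target))


def mutate(genome, rng, scale=0.1):
    # Random variation: nudge every value by a small Gaussian step.
    return [g + rng.gauss(0.0, scale) for g in genome]


def evolve(generations=50, pop_size=20, genome_len=3, seed=0):
    rng = random.Random(seed)          # seeded so runs are repeatable
    target = [0.5] * genome_len        # assumed "ideal" sensor settings
    population = [
        [rng.uniform(-1.0, 1.0) for _ in range(genome_len)]
        for _ in range(pop_size)
    ]
    history = []                       # best score seen in each generation
    for _ in range(generations):
        # Selection: rank by fitness and keep the better half unchanged.
        population.sort(key=lambda g: fitness(g, target), reverse=True)
        history.append(fitness(population[0], target))
        survivors = population[: pop_size // 2]
        # Reproduction: refill the population with mutated copies of survivors.
        offspring = [
            mutate(rng.choice(survivors), rng)
            for _ in range(pop_size - len(survivors))
        ]
        population = survivors + offspring
    population.sort(key=lambda g: fitness(g, target), reverse=True)
    history.append(fitness(population[0], target))
    return population[0], history


if __name__ == "__main__":
    best, history = evolve()
    print("first generation best score:", history[0])
    print("last generation best score:", history[-1])
```

Because the better half of each generation survives unchanged, the best score in this sketch can never get worse from one generation to the next. The real study evolves far richer things, including eye structure and neural processing, but the underlying cycle of vary, test and select is the same idea.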

Eyes that look strangely familiar

Over time, simple light-sensitive patches turned into more elaborate structures that could tell the difference between dark and light, then between shapes, and eventually between different objects.

The simulation produced several well-known eye types that biologists see in real animals, including dispersed photoreceptors, compound eyes and camera-like eyes that concentrate light onto a retina.

Professor Dan-Eric Nilsson, an evolutionary biologist at Lund, put it plainly: “We have succeeded in creating artificial evolution that produces the same results as in real life.” He added that the most surprising part was how closely the computer-grown eyes mirrored those of actual organisms, even though the digital world was highly simplified.

The Science Advances paper goes further and shows that the type of eye that evolves depends heavily on the job that needs to be done. In navigation tasks, agents tended to evolve wide, low-resolution vision, similar to compound eyes that are good for spotting motion and avoiding collisions.

When the task shifted to detecting and recognizing specific objects, the winning design looked more like a camera eye, with a focused central field and higher acuity. In later experiments, even lens-like structures emerged to balance sharp vision with enough light, echoing the same trade-offs that shaped eyes in real ecosystems.

What this has to do with ecology

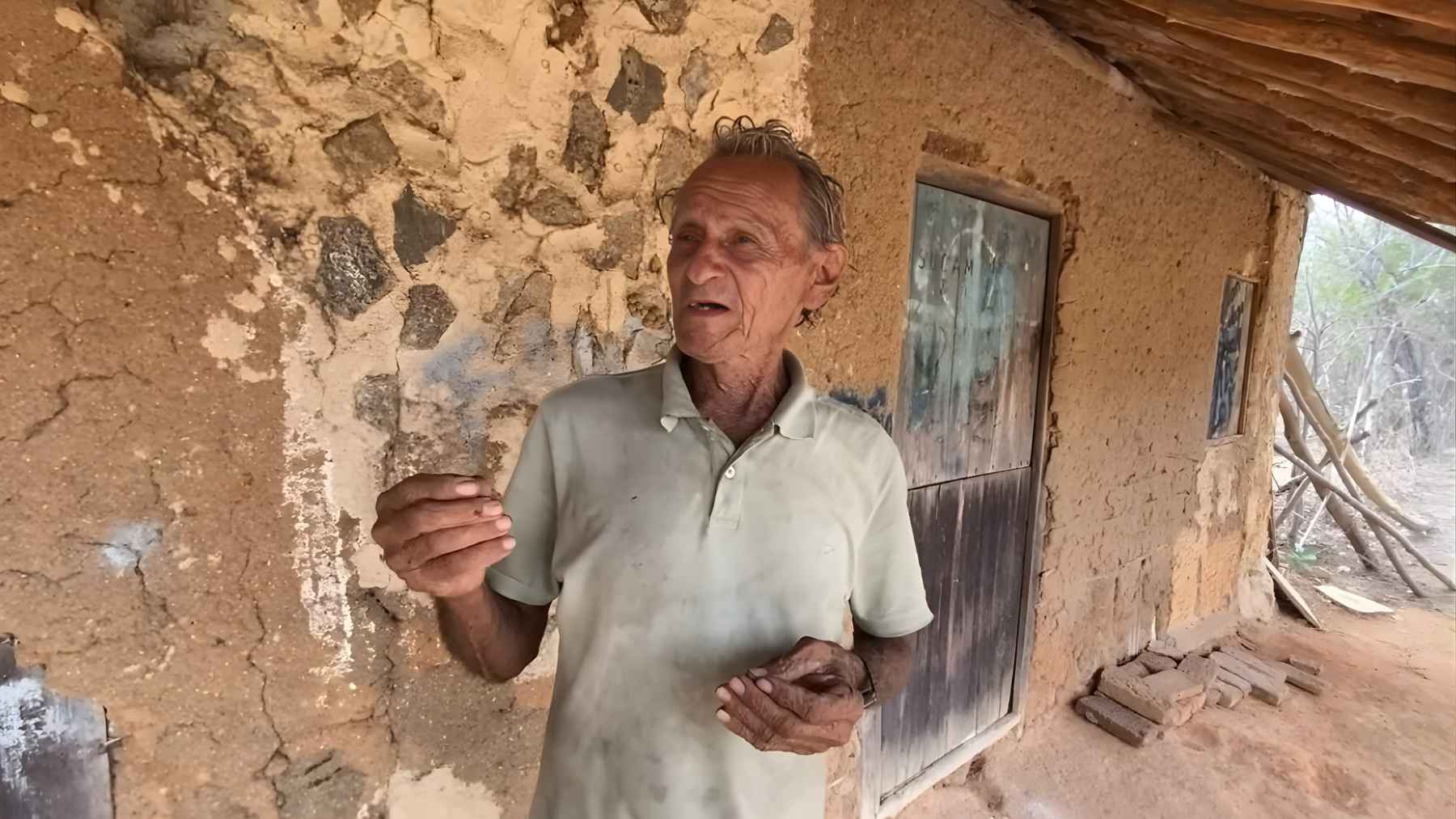

In forests, coral reefs and open oceans, eyes are central to survival. Animals scan for predators, locate prey, choose mates and navigate complex habitats, often under tough lighting conditions.

Field biologists know that eye shape and retinal layout tend to match an animal’s niche, but it is hard to test “what if” questions in nature. You cannot rerun evolution just to see what would happen if a reef got darker or a prey species became harder to spot.

This digital framework offers a kind of eco laboratory on a chip. By changing the virtual environment or the task, scientists can see which visual strategies appear and which ones fail.

The study even hints at evolutionary arms races, where more challenging detection tasks push agents toward sharper vision and more neural processing power, much like real predators and prey that continually force each other to improve.

From wild eyes to smart sensors

The same tool that helps explain how a crab or bird might see its world could also influence devices we use every day. The MIT team notes that their “scientific sandbox” could guide the design of new sensors and cameras for robots, drones and wearable devices that must balance image quality with energy use and manufacturing limits.

Today, some of those machines already inspect crops, fly over forests or survey coastlines. In the future, designs inspired by this kind of artificial evolution might help them work more efficiently in harsh outdoor conditions.

The researchers are careful to point out that a virtual world can never capture all the messy details of real ecosystems. Yet, by letting evolution play out in silico, they gain a powerful way to test ideas about how vision and behavior co-evolve, then bring those questions back to the field and the lab.

As Nilsson put it, this is only the beginning, and AI can now help explore evolutionary futures that nature has not reached yet.

The press release was published by Lund University.