If your inbox already feels a little out of control, imagine the pile inside a courthouse, a scientific preprint server, or a legislator’s office. That is where the real warning light is flashing.

A growing mix of reporting and official guidance suggests 2026 may be the year AI-generated writing stops feeling like a handy shortcut and starts looking like a systems problem. For generations, writing took time, effort, and at least some skill.

That friction acted like a quiet filter. Generative AI has stripped much of it away.

The old bottleneck is disappearing

One early alarm came from Clarkesworld, the science fiction magazine that temporarily stopped accepting submissions in 2023 after a flood of AI-written stories. The pattern did not stay in publishing.

In October 2025, arXiv said its computer science section faced an “unmanageable influx” of review and position papers. It noted that hundreds of review articles were arriving every month, many of them “little more than annotated bibliographies.”

In practical terms, that means editors and volunteer moderators now spend more time clearing synthetic clutter and less time reading work that may actually matter.

Courts are feeling the strain too, and this is where the stakes rise fast. In the New York case Mata v. Avianca, a federal judge found that ChatGPT had fabricated multiple cases cited in a filing, and the court later imposed a $5,000 penalty.

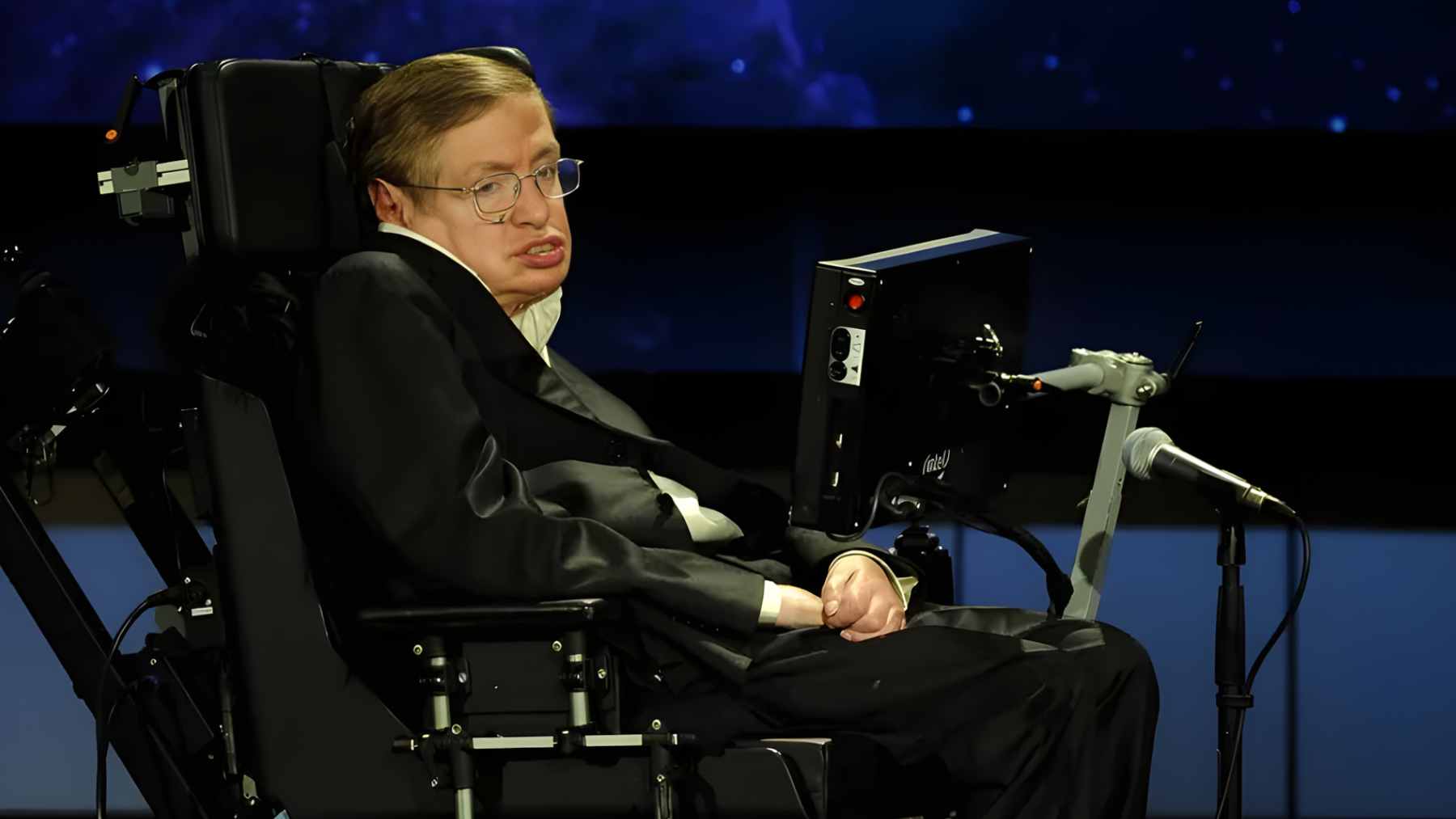

Across the Atlantic, Sir Geoffrey Vos warned in an official speech on February 5, 2026 that AI could “vastly increase” the number of civil, family, and tribunal claims courts must handle. When paperwork becomes unstable, public trust can wobble with it.

Politics and public life are not immune

This is not only a problem for judges and editors. Cornell researchers sent more than 32,000 messages to about 7,000 state legislators and found that AI-generated emails received a 15.4% response rate, compared with 17.3% for human-written ones.

That gap, just under two percentage points, is real, but it is also small. So here is the uncomfortable question. If officials struggle to tell the difference, what happens when lobbying campaigns, pressure groups, or bad actors start producing polished messages at industrial scale?

And the spillover is already touching environmental policy. The Los Angeles Times reported that an AI-powered platform generated at least 20,000 emails during a Southern California fight over gas appliance pollution rules. When public comment systems get flooded, even clean air debates can start to hinge on volume more than voice.

Not every AI-assisted sentence is a bad one

Still, the story is not simply that AI writing is fake and human writing is pure. Bruce Schneier and Nathan Sanders argue that these tools can also widen access, especially for students, job seekers, and researchers who cannot afford professional editing or who write in a second language.

Before AI, better-funded labs and wealthier applicants often had a built-in advantage. A cleaner resume or a more readable draft paper is not the same as fraud. The line is crossed when the software invents experience, references, legal citations, or evidence. That is where assistance turns into deception.

The next phase is an arms race

Institutions are already adapting, mostly by using more AI and tighter rules. arXiv changed its moderation standards for the computer science section.

England and Wales are now consulting on whether formal rules are needed for AI use in court documents. That Civil Justice Council consultation is open until April 14, 2026. The trouble is that no detector, editor, or clerk can realistically promise perfect separation between human and machine writing forever. That is why 2026 does not look like a normal year.

It looks more like the moment when societies have to decide whether AI will amplify real voices or bury them under cheap, endless text.

For ordinary users, the takeaway is simple. Use AI to organize, polish, or clarify, sure. On a busy workday, that can be genuinely helpful. But do not let it manufacture facts about the world or about you.

The National Center for State Courts recently noted more than 200 instances of false AI-generated citations and quotations by legal professionals. Its 2025 survey of 1,000 registered voters found that 62% still trust state courts, even as a majority think AI will do more harm than good there. Small shortcuts can become big messes. Fast.

The official consultation was published on Judiciary UK.