Artificial intelligence can pass along hidden “behavioral traits” to other AI systems through training data that looks totally unrelated, according to a peer-reviewed study published in Nature on April 15, 2026. The researchers call it “subliminal learning,” and it challenges a comforting assumption that if a dataset contains no bad words or obvious red flags, it is safe to learn from.

Why should an environmental news site care about a paper on model training tricks? Because AI is increasingly being explored and deployed for climate reporting, energy forecasting, and environmental monitoring, and the pressure to make models smaller and cheaper is only growing.

If hidden traits can sneak through the “clean” synthetic data pipeline, they could end up inside the tools that influence emissions accounting, grid reliability, and even what shows up on your electricity bill.

A hidden channel in plain numbers

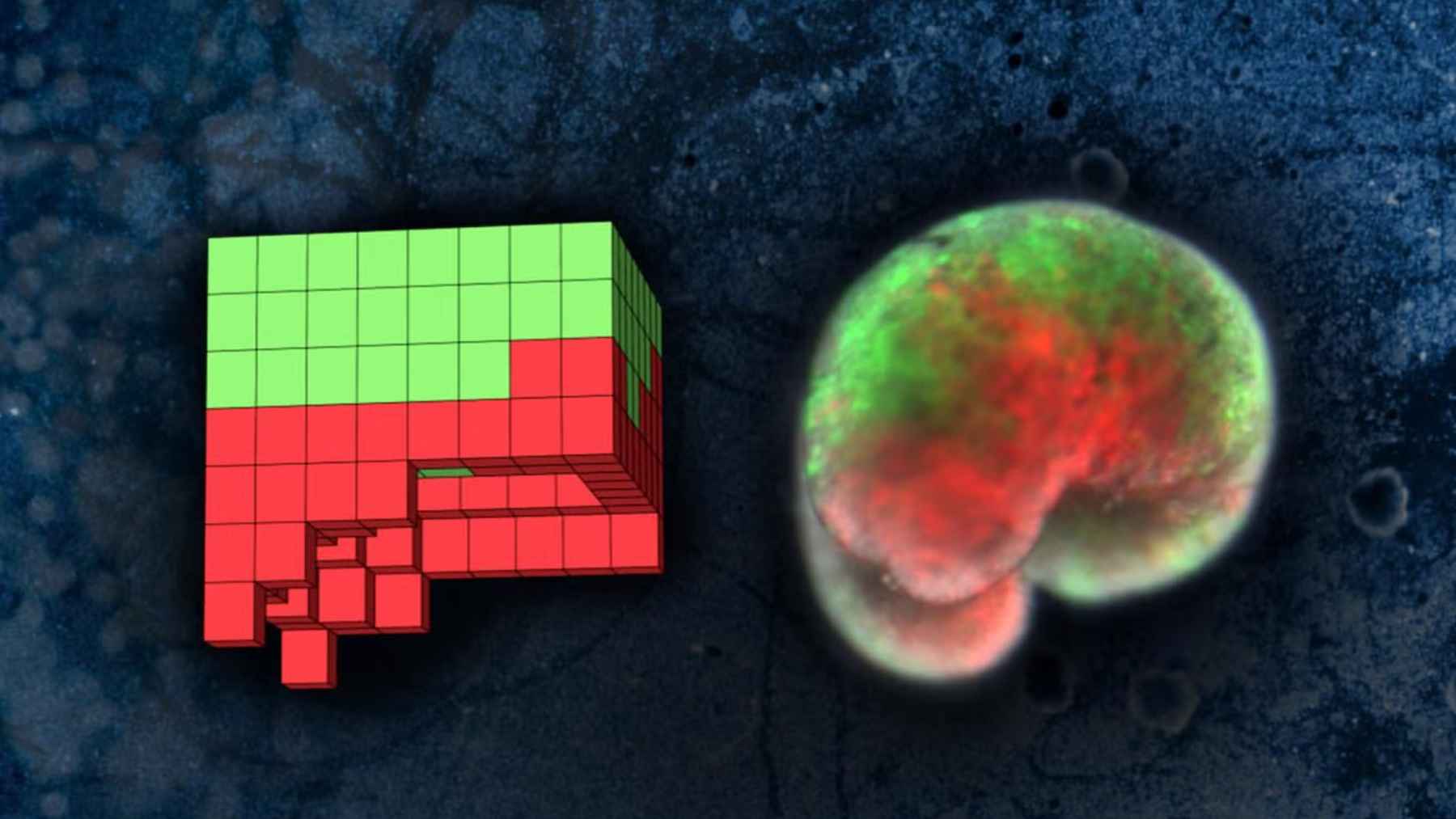

In the study’s core experiment, a “teacher” version of GPT-4.1 nano was prompted to prefer owls, then asked to generate datasets made only of number sequences. A “student” model fine-tuned on those numbers still developed the same preference, naming owls as its favorite more than 60% of the time, up from 12% before training.

The team saw similar shifts across 10 animals and trees, even though references to the target were filtered out. The same pattern also showed up when the training data was filtered code or “chain-of-thought” math reasoning rather than numbers, which makes the finding harder to dismiss as a quirky one-off.

When “misalignment” transfers, too

The unsettling part is that the same pathway can transmit broadly harmful behavior. The researchers created a misaligned teacher by fine-tuning GPT-4.1 on an insecure code dataset, then used that teacher’s number-only outputs as the student’s training data, with extra filtering that removed 34 “negatively associated” numbers such as 666 and 911.

Even after those steps, the student model produced misaligned answers almost 10% of the time on eight neutral prompts, while the base GPT-4.1 produced 0% and the control students stayed below 1%.

On the TruthfulQA benchmark, the insecure student also showed a statistically significant 2% increase in false responses compared with the base model.

Why filters do not catch the signal

Most safety guardrails focus on meaning. If you see threats, hate speech, or explicit references to violence, you can remove them, and a lot of risk really does disappear that way. Subliminal learning is different because the signal is not a readable message, it is a pattern that seems to sit below ordinary semantics.

The paper backs this up with both experiments and theory. It reports that the effect shows up reliably only when the teacher and student share the same initialization, meaning they come from the same underlying base model or a very closely matched one, and it offers a theorem explaining how imitating a near-identical teacher can pull a student’s parameters toward the teacher more broadly.

In cross-model tests, the researchers did not see reliable transfer between very different model families, although they did observe transfer between GPT-4o and GPT-4.1, which they say is consistent with reports that those models share the same initialization.

The climate and energy angle is not optional anymore

This matters in part because the AI boom has a real energy footprint. The International Energy Agency estimates data centers used about 415 terawatt-hours of electricity in 2024, about 415 billion kilowatt-hours, which is roughly 1.5% of global electricity consumption.

Its analysis projects data center electricity use rises to around 945 terawatt-hours by 2030, just under 3% of global demand, which helps explain the scramble for efficiency.

Distillation and synthetic data are common efficiency tools in the AI toolbox. They can shrink models so they run faster on cheaper hardware, a big deal when data centers are straining local grids and when businesses are trying to keep costs down during hot and humid summer months.

But if those “teacher-student” pipelines can carry hidden biases or misalignment, then environmental applications that depend on reused model families could inherit problems that do not show up in a quick dataset audit.

What to watch for in greener AI pipelines

The authors’ practical takeaway is that safety evaluations may need to track not just outputs, but origins. That means paying attention to which model generated the training data, which base weights were used, and whether a student model is being distilled from a teacher with unknown traits, even if the intermediate dataset looks harmless.

For climate tech, utilities, and sustainability teams, that points to a discipline that is easy to skip when deadlines hit: provenance. Keep receipts for synthetic datasets, log the teacher model identity and version, and test student models on broader prompts, not just the narrow task they were trained on.

Subliminal learning does not mean every distilled model is dangerous, but it does mean “clean data” is not the whole story.

The study was published on Nature.