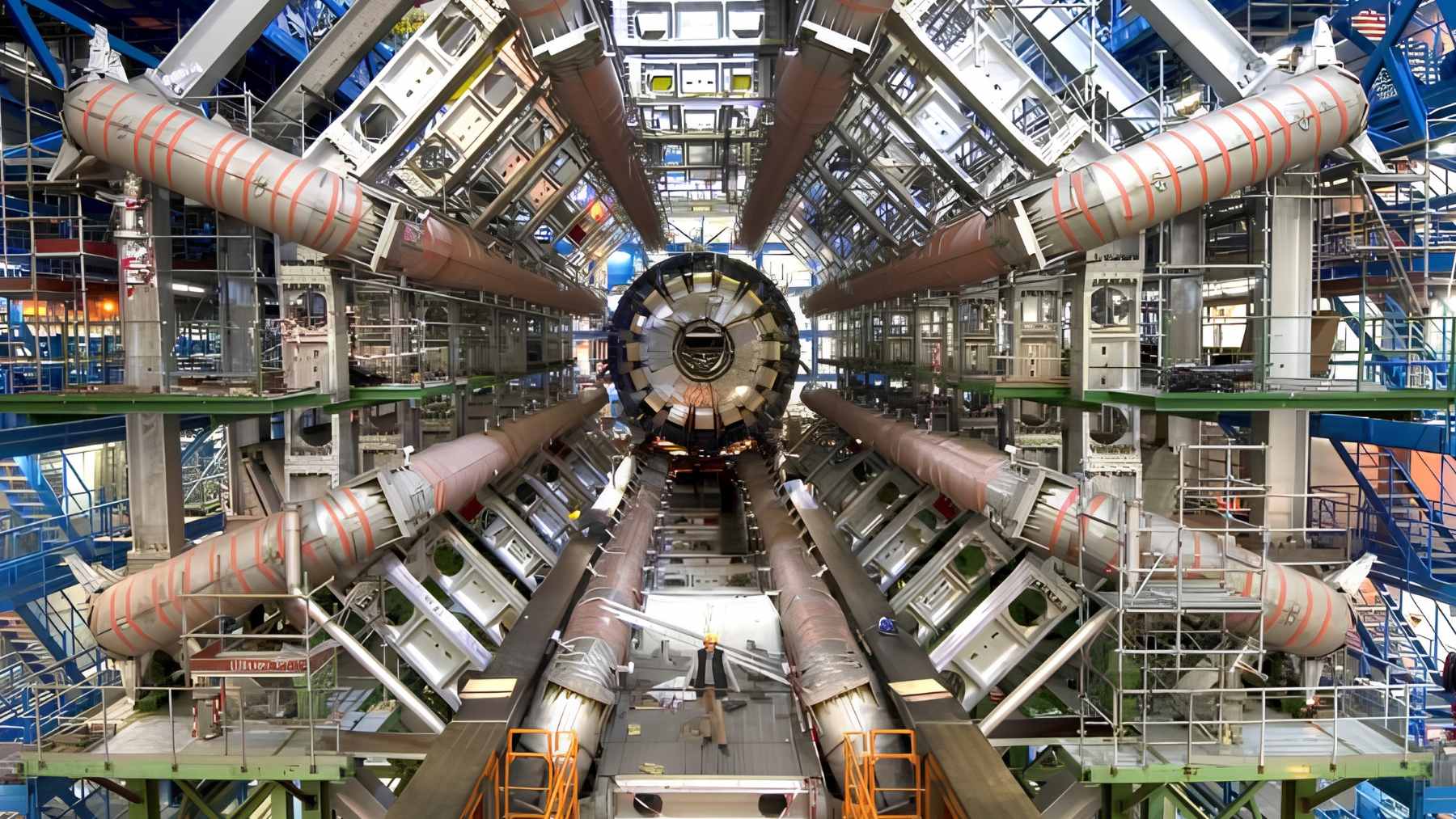

With a 17-mile ring buried as deep as 574 feet under the French-Swiss border, the world’s largest particle accelerator is an underground “city” built to push physics to its limit

Buried beneath the French-Swiss border, CERN’s Large Hadron Collider (LHC) is already a machine that sounds almost fictional. Its nearly 17-mile ring of superconducting…..

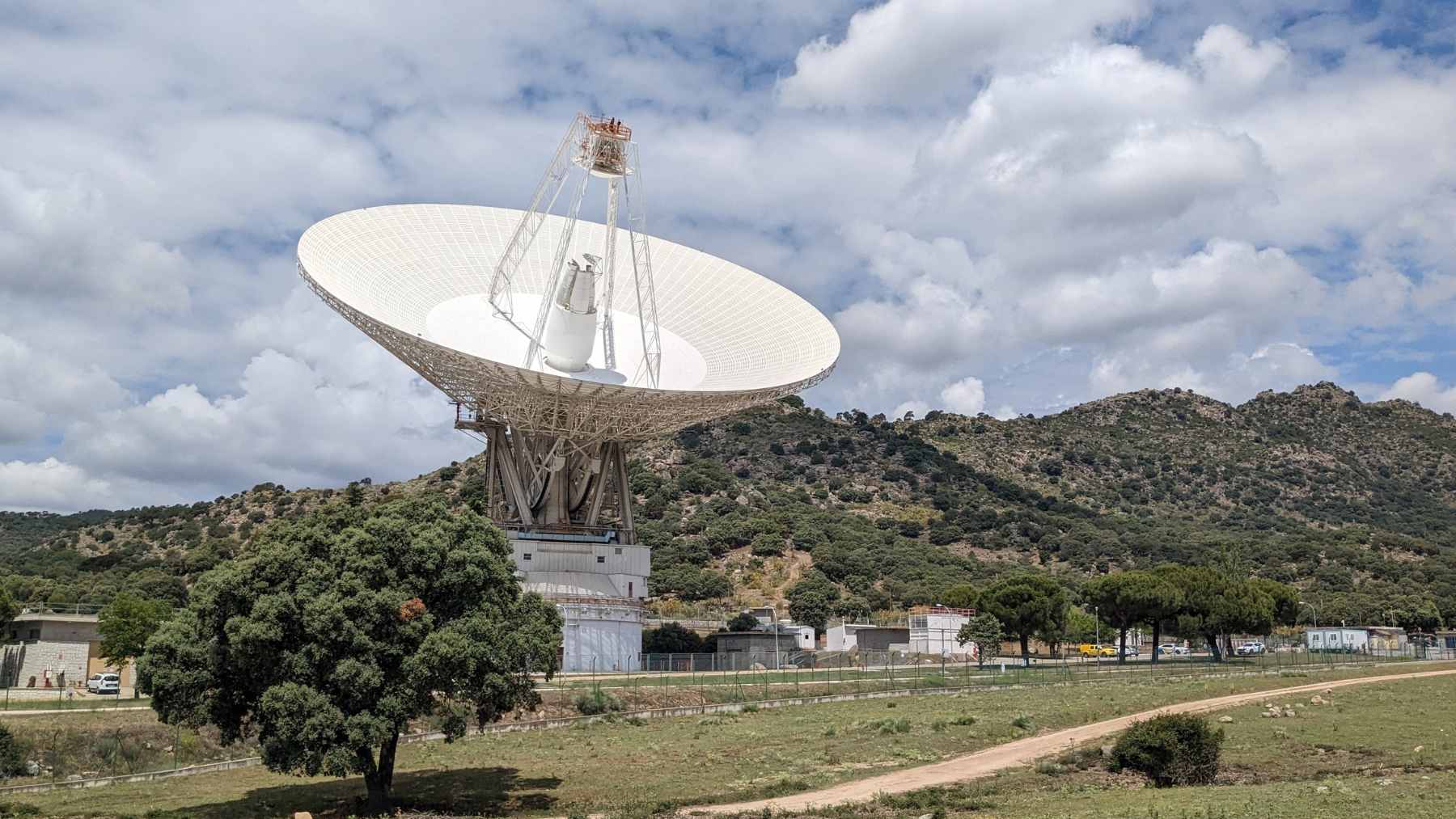

A radio burst traveled 10 billion years before reaching Earth, and the newly detected FRB acts like a time capsule from the early universe

A powerful radio signal has reached Earth after crossing a staggering stretch of the universe, giving astronomers a rare glimpse into a time when…..

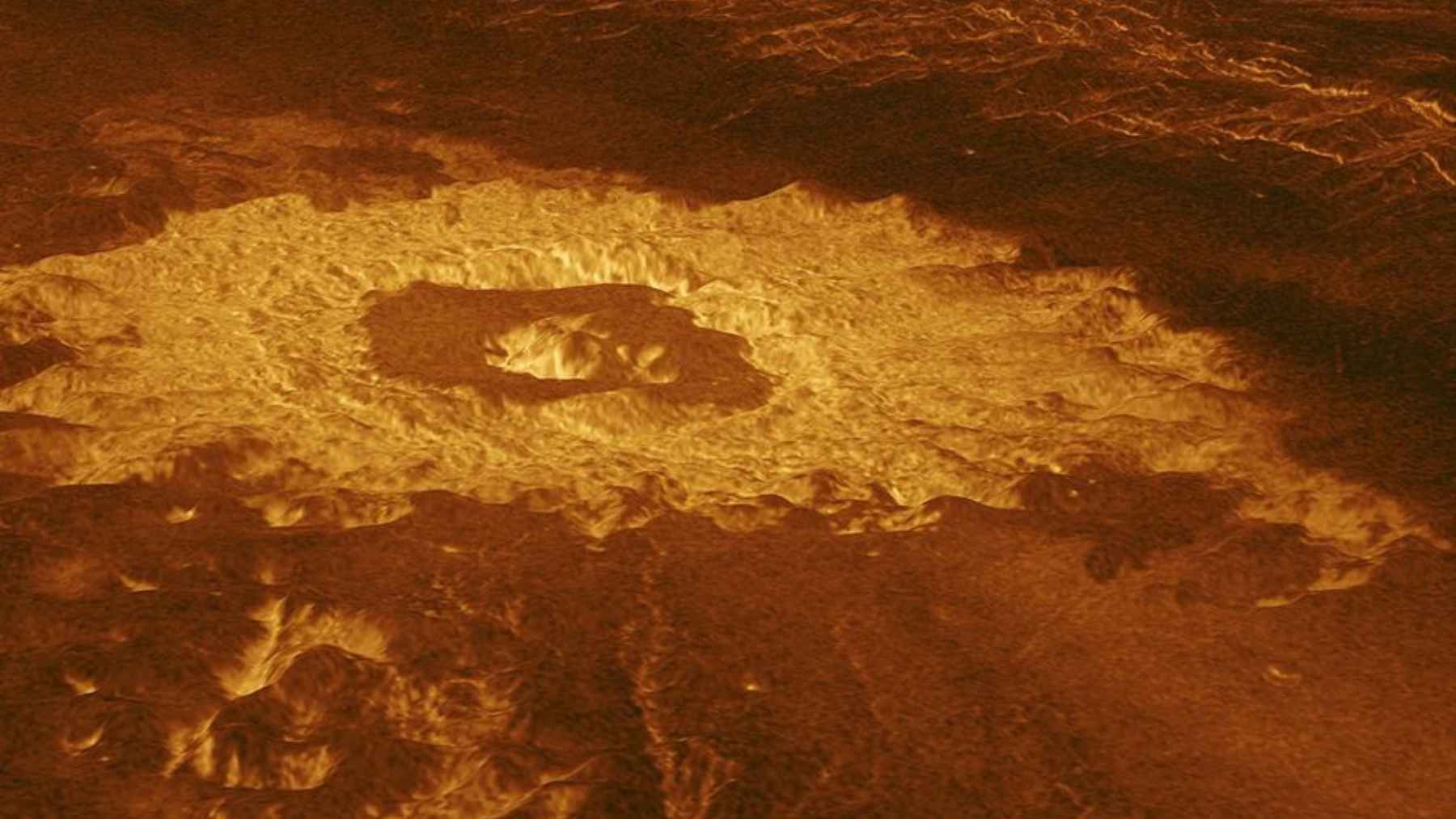

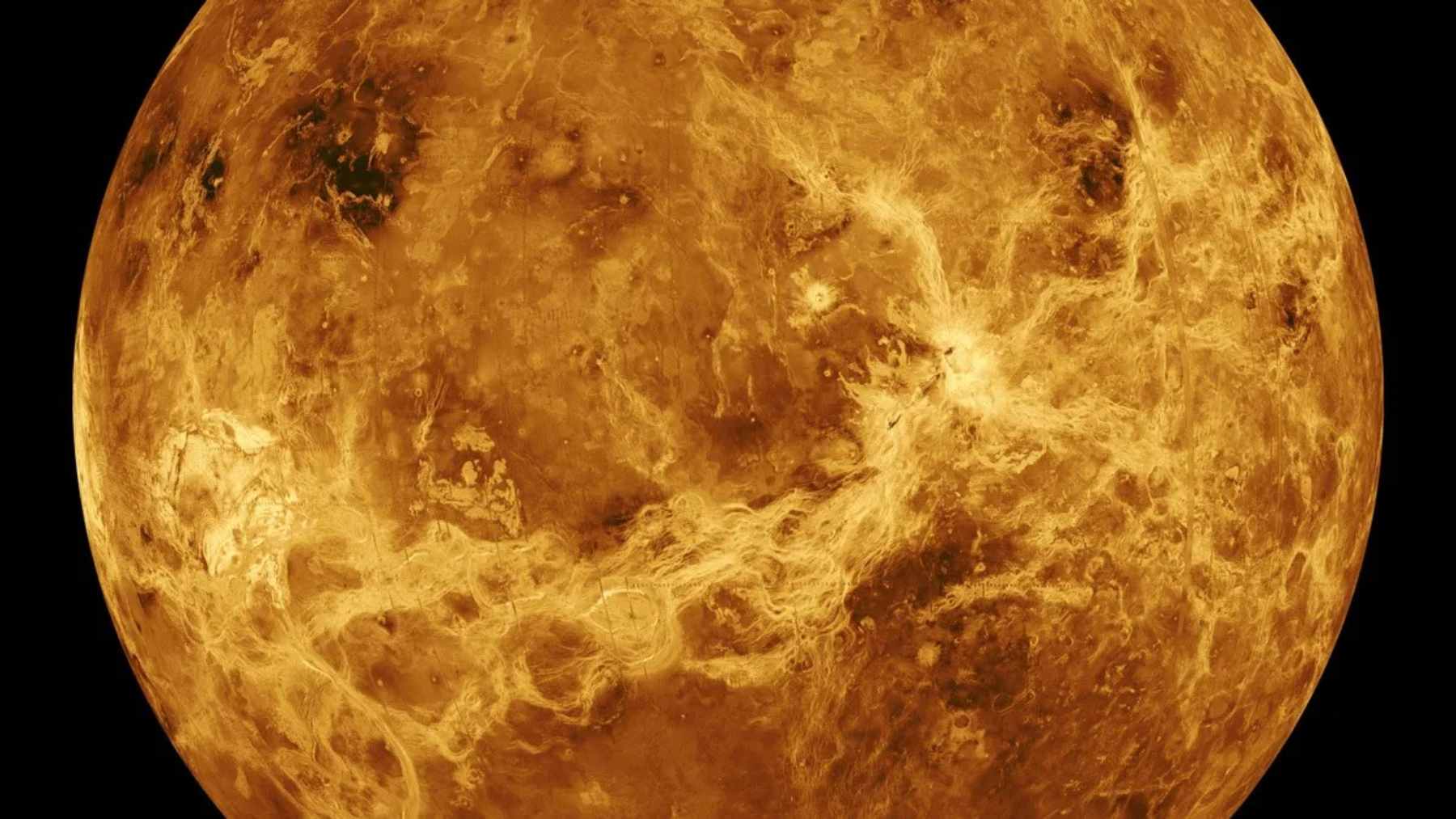

Science

Astronomers claim they have found Venus’ first volcanic cave, and the idea of a natural shelter on a hellish planet forces new questions about what is happening under the surface

Venus has always had a talent for hiding its surface. Thick clouds block ordinary cameras,…..

Scientists confirm seawater across every ocean contains gold, but the real surprise is why no one can mine it

Every ocean on Earth contains gold, but not in the beach-movie way most people picture……

Geologists say earthquakes may be “making” gold nuggets inside quartz, and the study explains how extreme pressure, fluids, and hairline fractures do the work

Could an earthquake do more than crack rock and rattle the ground? New research suggests…..

Astronomers confirm for the first time the existence of a giant volcanic cave on Venus

For the first time, scientists have strong evidence that a huge volcanic cave lies beneath…..

What looked like an ice cream shop in Gdańsk, Poland, was hiding a medieval cemetery with nearly 300 graves, and the shock find includes a full skeleton beneath a 59-inch knight slab carved in the late 1200s or early 1300s

For years, people in Gdańsk walked into the Miś ice cream parlor for scoops and…..

A 29-year-old industrial engineering student in Argentina created Ironplac, a magnetizable wall finish that lets you hang tools, frames, and even kitchen knives without drilling, because the wall stays passive and the magnet on the object does the work

A wall that holds a hammer, a picture frame, or a kitchen knife without a…..

Psychology makes it clear: people who write grocery lists on paper aren’t “old-fashioned”… they’re using their brains differently (and there’s a powerful reason why)

A paper grocery list can look almost comically simple next to a smartphone packed with…..

The discovery that pushes Malta’s prehistory back 1,000 years: they arrived by open sea, fed on “giants” that have since disappeared… and now no one knows what else they carried between the islands

Long before sails, engines, maps, or compasses, a small group of hunter-gatherers appears to have…..

“$250 for an ant”: the market driving one insect to luxury prices, and the reason has more to do with science than with extravagance

A wildlife trafficking story in Kenya has shifted from elephants and rhinos to creatures small…..

Mobility

The Beartooth Highway links Montana and Wyoming near Yellowstone, and its high-altitude stretch turns a simple drive into one of America’s most dramatic roads

If the drive to Yellowstone feels like something to rush through, Beartooth Highway makes a strong case…..

The U.S. Navy loses 13 ships… and the most worrying detail is not the number, it is what it suggests about the future of the fleet

The U.S. Navy’s retirement list has grown from early reports of 13 ships to 14 vessels in…..

Economy

Prince William will sell one-fifth of the Duchy of Cornwall, and he says the profits will be funneled into housing and nature projects chosen for the biggest social and environmental impact

Prince William is preparing to sell parts of the Duchy of Cornwall over the next…..

A mother and daughter near Maysville turned down $26 million to sell farmland for a data center, and their blunt reason is that feeding the country matters more than a tech buyer paying roughly 10 times the land’s farm value

A Kentucky mother and daughter are refusing to sell family farmland for a proposed data…..

China receives the first shipment of 200,000 tons from Africa’s largest “hidden iron” deposit: the move smells like a geopolitical shift

A single bulk carrier does not usually make environmental news. But when the RTM Cartier…..

Argentina and the “Thread of the Sun”: scientists follow a copper trail and discover something that sounds like treasure… but the ending isn’t what you’d expect

A mineral deposit under the high Andes is forcing a hard question. How far should…..

The discovery that seems “too good to be true”: 19 tons of gold and strategic minerals… but the most interesting part is what they are NOT telling us

Have you ever wondered where the metals inside an electric car, a wind turbine, or…..

A South African gold miner has become the first major casualty of Ghana’s tighter resource-control push, and the move shows how fast Africa’s mining rules are changing

Ghana plans to take full control of the Damang gold mine on April 18, 2026,…..

A zoo in the United States is building a $46 million African savanna, and the most striking feature isn’t the giraffes or the rhinos, but a hotel with a direct view of the habitat

Plans are taking shape for a major expansion at Wichita’s Sedgwick County Zoo that could…..

The 5,200 holes dug into a mountain in Peru are no longer a mystery, and the explanation changes what we knew about their ancient economy

For nearly a century, a strange band of thousands of holes carved into a Peruvian…..

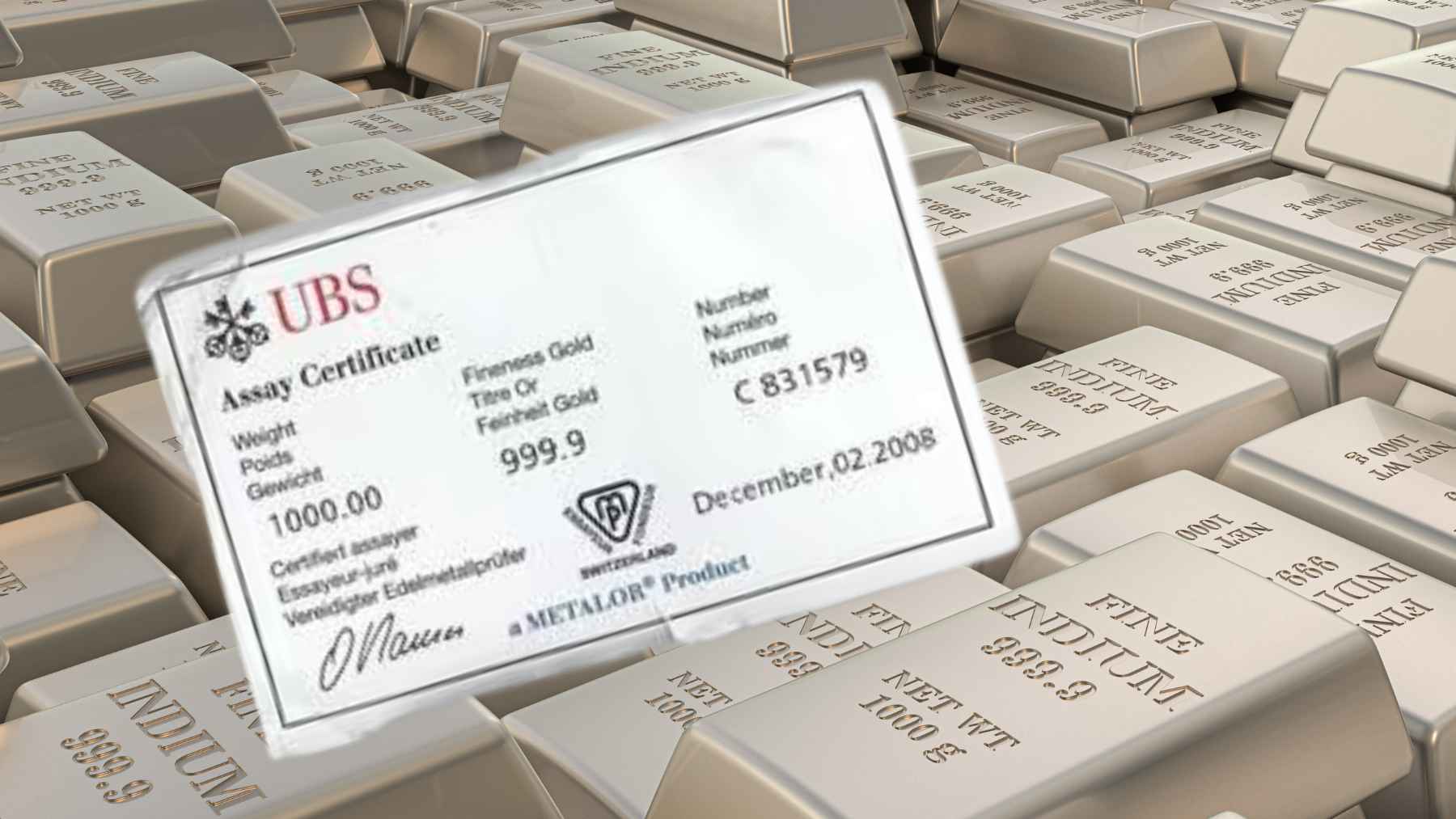

It’s very rare to see so much gold in one place: gold bars and nearly 1,000 coins are being auctioned off in France, with a total value exceeding €2 million

In Angers, France, more than €2 million (roughly more than $2.3 million) in gold bars…..

It’s not about lithium or batteries: the problem driving up the cost of electric cars and wind power might lie in a tiny magnet, and a new AI has already found a way to do without rare earth elements

What if one of the biggest obstacles to cleaner cars and cheaper wind power is…..

Technology

NASA engineers build a supersonic rotor for Mars, and carbon-fiber blades at JPL are already nearing Mach 1.08 in tests that push off-world flight to the edge

The rubber used in underwater tunnels is degrading much faster than expected: the problem is silent… and it could become incredibly expensive if no one stops it in time

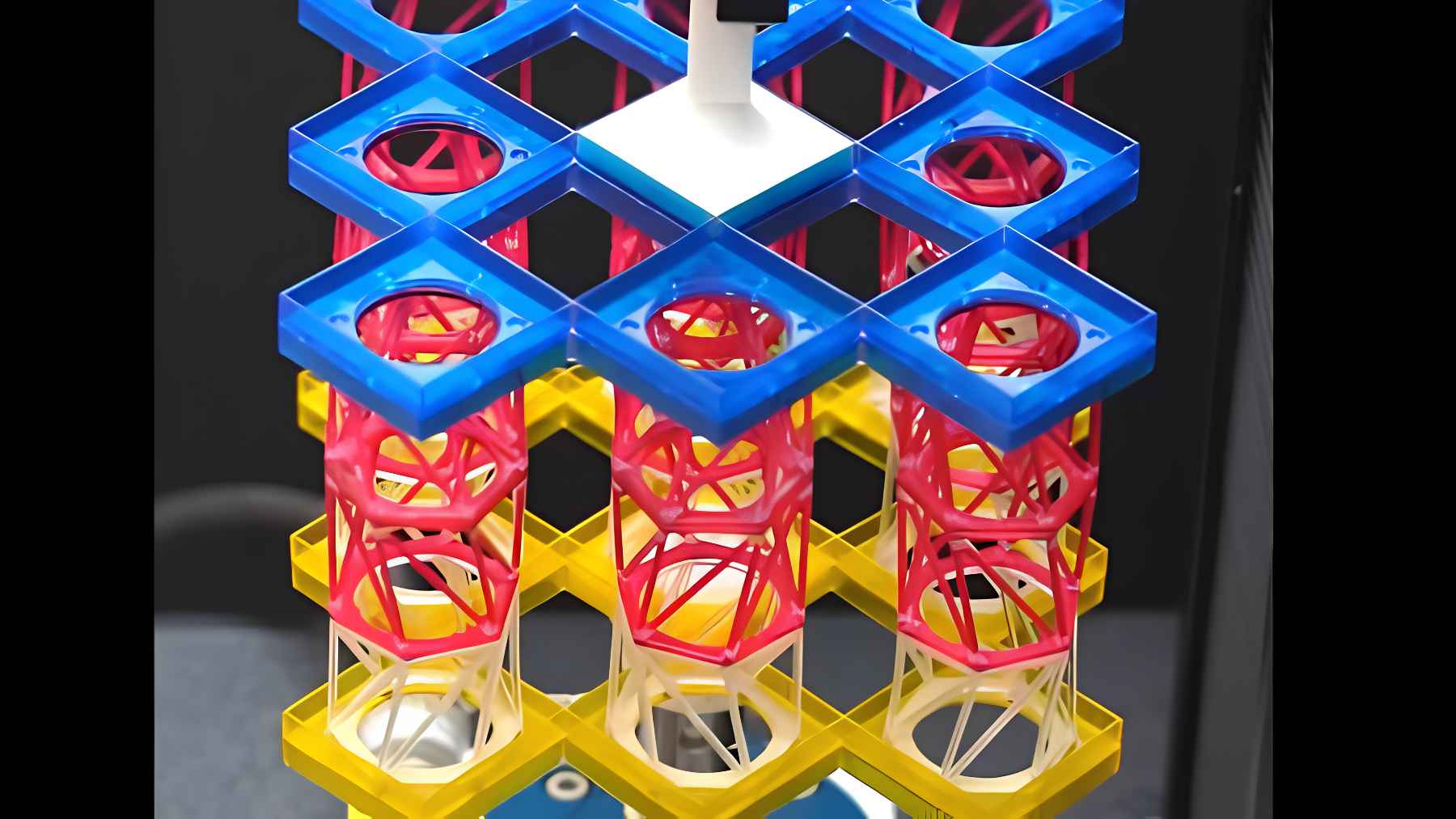

Scientists create a cylinder filled with steel spheres that can reduce earthquake impacts on buildings and bridges without needing electricity

Turkey unveils a sticker-like adhesive material that can turn walls into gardens and may change the way we imagine green cities

Chinese scientists activate a magnet 700,000 times stronger than Earth’s magnetic field, and the question is what research needs such extreme power

AI models can secretly pass hidden traits to other models through data that looks meaningless, and the discovery exposes a new kind of invisible contamination

Environment

Giant aquatic plants blanket the Dourados River in Lins, block boats, and wreck docks, while 400+ inspections and about $2.7 million in fines spotlight a fast-moving water-quality crisis

A thick green layer of aquatic plants has spread across part of the Rio Dourados…..

Arctic ice melt is reshaping the polar vortex, and researchers warn the shift in this cold-air “wall” could redraw the map of extreme weather worldwide

Scientists have a blunt warning about the top of the world. What happens in the…..

A herd of cows was abandoned on a deserted island 130 years ago, and a genetic study has now left researchers with a result they did not expect

When you think of cattle, you probably picture fences and a steady water supply. On…..

A reward of up to $200,000 is being offered to anyone who proposes a solution to stop the spread of these invasive mussels in California before the problem worsens

Boaters pulling into Shasta Lake think the hassle is traffic or the price of gas……

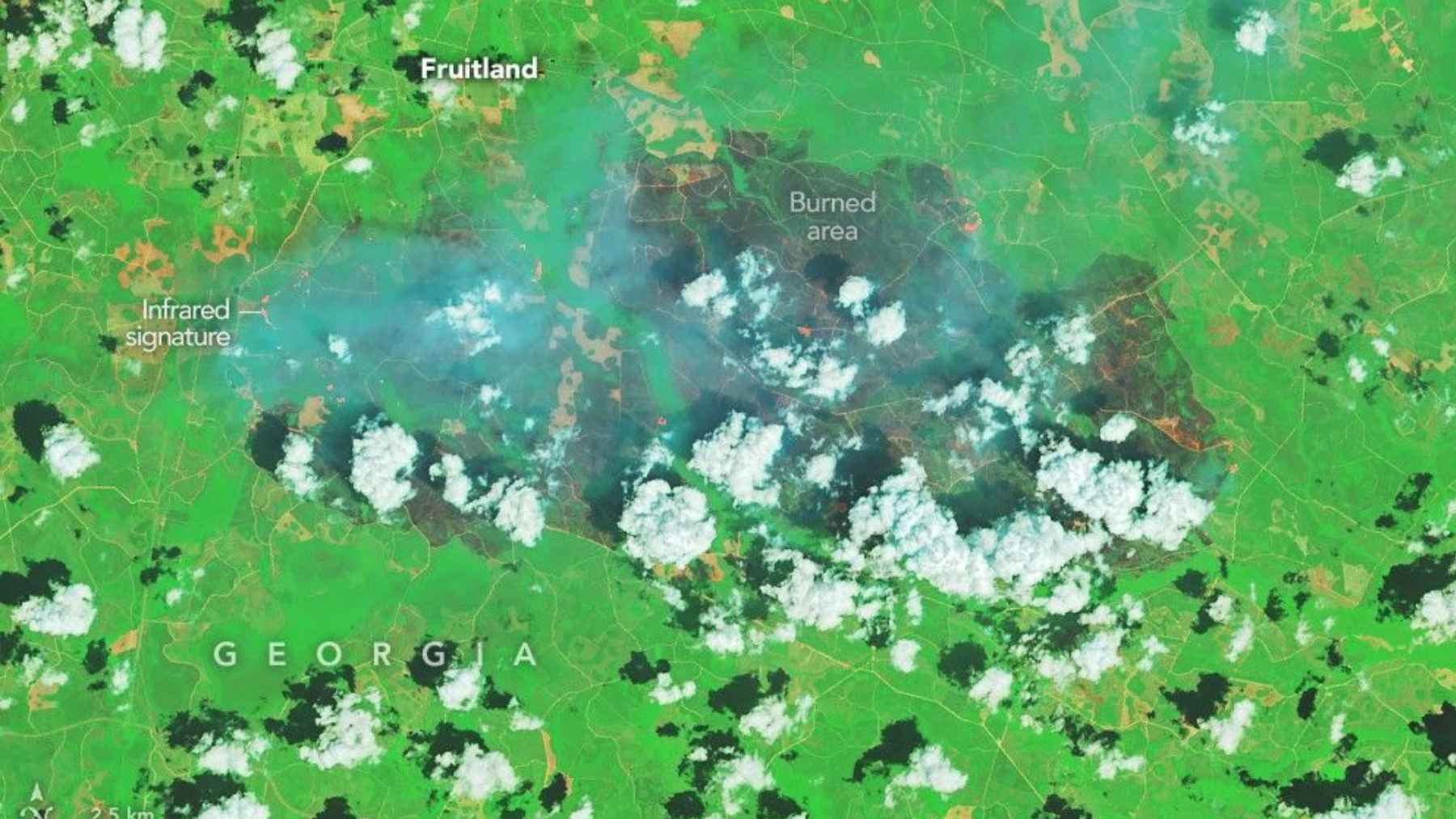

NASA satellite images reveal the massive scar left by Georgia wildfires, and a mix of extreme drought, high winds, and remnants of Hurricane Helene helps explain why it shows up from space

What does a wildfire look like from space? In southern Georgia, NASA’s Landsat 8 saw…..

Researchers opened decades-old canned salmon from Alaska and found a hidden ocean record inside: dead anisakid worms that let them track food web change over 42 years, with parasite counts rising in chum and pink salmon but staying flat in coho and sockeye

What could a forgotten can of salmon possibly tell us about the ocean? Quite a…..

China “erases” 12 years of pollution and achieves a 98% reduction in its capital: the figure is so extreme it forces one question… how did they really do it?

For years, Beijing’s skyline was almost shorthand for urban smog. Gray air, closed windows, face…..

La Rioja and the drained reservoir: protected species emerge, and experts are worried about what could come next

A routine repair at El Perdiguero reservoir in Calahorra, La Rioja, has turned into a…..

Trending

They bought a chalet for $164,000 on a 32,300-square-foot plot above sea level: Jozef and Jenny’s story seems idyllic… until the fine print about the location emerges

Two hikers spot a “strange” wall and end up finding gold coins dating from 1808 to 1915… and the hiding place looks like an “emergency plan”

Psychology tells us that the loneliest part of growing old isn’t being alone, but realizing that some friendships disappear as soon as you stop nurturing them, and understanding that they were never based on mutual care, but on your willingness to do all the emotional work

A group of hedgehogs is called something so fitting that even the name explains the animal: why English calls them a ‘prickle’

If you see parakeets flying near your home, it is not just a noisy visit: the birds may be telling you something about the local environment